vRealize Automation 8.x - vRA Not Starting if K8s IP Range is Changed

vRealize Automation (vRA) 8.0 introduced a completely new architecture in which the vRA application itself runs on top of a Kubernetes (k8s) cluster. Unfortunately, this release hardcoded the IP address range used by the internal k8s cluster. If your corporate network already used addresses in that range, you would likely see plenty of errors in vRA.

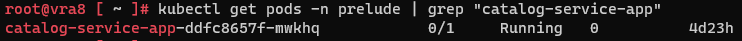

Thankfully, vRealize Automation 8.2 added support for changing the internal IP address range used by the Kubernetes cluster. Unfortunately, a bug in the code means that only the 10.0.0.0/8 and 127.0.0.0/8 address spaces are supported. If you choose the 192.168.0.0/16 address space, the vRA deployment won't start up. Running `kubectl get pods -n prelude` shows the catalog-service-app pod with a READY value of 0/1, even though its STATUS is Running:

```shell
kubectl get pods -n prelude | grep "catalog-service-app"
```
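The READY column is what matters here: a pod can report a STATUS of `Running` while its container has not yet passed its readiness probe. As a minimal sketch, you could filter the whole pod list for any pod whose ready count is incomplete. The `not_ready_pods` helper below is hypothetical (it is not part of vRA or kubectl), and it assumes the default `kubectl get pods` column layout of NAME, READY, STATUS, RESTARTS, AGE:

```shell
# Hypothetical helper, not shipped with vRA or kubectl.
# Reads `kubectl get pods` table output on stdin and prints NAME, READY and
# STATUS for every pod whose READY value (e.g. 0/1) shows fewer ready
# containers than the pod defines.
not_ready_pods() {
  # NR > 1 skips the header row; $2 is the READY column ("ready/total").
  awk 'NR > 1 { split($2, r, "/"); if (r[1] != r[2]) print $1, $2, $3 }'
}
```

On an appliance you would pipe the live output into it, for example `kubectl get pods -n prelude | not_ready_pods`.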

Running the `describe` command below shows the following error message:

```shell
kubectl describe pod catalog-service-app -n prelude
```

```
Readiness probe failed: Get http://192.168.0.162:8000/actuator/health/readiness: dial tcp 192.168.0.162:8000: connect: connection refused
```

The root cause is that the vRA pods are configured to bypass the proxy configuration (even if you haven't set up a proxy server) so that they can communicate directly with each other. This bypass is already in place for the 10.0.0.0/8 and 127.0.0.0/8 address spaces, but not for the whole 192.168.0.0/16 address space.
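To see why the pod address from the error above (192.168.0.162) falls outside the bypass, it helps to test it against each range. The sketch below is a plain shell illustration of CIDR matching (it runs in bash or dash), assuming the bypass list behaves like a standard network/prefix-length match. The `ip_to_int` and `ip_in_cidr` helpers are hypothetical and are not the logic inside vRA's `proxy_config.py`:

```shell
# Hypothetical helpers for illustration only; not part of vRA.

# Convert a dotted-quad IPv4 address (e.g. 192.168.0.162) to a 32-bit integer.
ip_to_int() {
  local IFS=.
  set -- $1            # split the address into its four octets
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Succeed (exit status 0) if address $1 lies inside CIDR range $2.
ip_in_cidr() {
  local ip net bits mask
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")      # network part, e.g. 192.168.3.0
  bits=${2#*/}                    # prefix length, e.g. 24
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
}

for range in 10.0.0.0/8 127.0.0.0/8 192.168.3.0/24 192.168.0.0/16; do
  if ip_in_cidr 192.168.0.162 "$range"; then
    echo "$range: covered"
  else
    echo "$range: not covered"
  fi
done
# 10.0.0.0/8: not covered
# 127.0.0.0/8: not covered
# 192.168.3.0/24: not covered
# 192.168.0.0/16: covered
```

Only the /16 range contains the pod address, which is consistent with the fix of widening the 192.168.3.0/24 entry to 192.168.0.0/16.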

To be clear, this is a bug in vRA 8.2 (including Hotfix 1), and VMware should be releasing a patch soon.

Until a patch is available, you can work around the issue as follows. Log on to each appliance in your cluster and change to the Python modules directory:

```shell
cd /opt/python-modules
```

Edit the proxy_config.py file.

```shell
vi $(find . -name proxy_config.py)
```

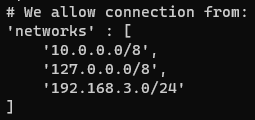

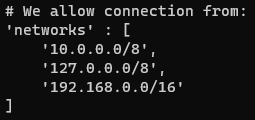

Locate the proxy bypass configuration and change the `192.168.3.0/24` entry to `192.168.0.0/16`.

Before: `192.168.3.0/24`

After: `192.168.0.0/16`

Once the file is updated, save it and exit vi with `:wq`.

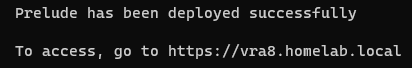
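If you would rather not edit the file interactively on every appliance, the same change can be made with `sed`. This is a minimal sketch that assumes GNU `sed` (for in-place `-i` editing), that `proxy_config.py` contains the literal string `192.168.3.0/24`, and that the file paths contain no spaces. The `fix_proxy_range` function name is hypothetical:

```shell
# Hypothetical helper: fix_proxy_range [dir]
# Finds every proxy_config.py under dir (default /opt/python-modules),
# keeps a .bak copy, and widens 192.168.3.0/24 to 192.168.0.0/16.
fix_proxy_range() {
  local dir files file
  dir=${1:-/opt/python-modules}
  files=$(find "$dir" -name proxy_config.py)
  [ -n "$files" ] || { echo "proxy_config.py not found under $dir" >&2; return 1; }
  for file in $files; do
    cp "$file" "$file.bak"                                  # backup of the original
    sed -i 's#192\.168\.3\.0/24#192.168.0.0/16#' "$file"    # GNU sed in-place edit
    grep -n '192\.168\.0\.0/16' "$file"                     # show the changed line(s)
  done
}
```

On each appliance, define the function and run `fix_proxy_range` with no argument to target `/opt/python-modules`; a `.bak` copy of each edited file is left next to it.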

After updating the file on every appliance, redeploy vRA by running the following command:

```shell
/opt/scripts/deploy.sh
```